Question 201

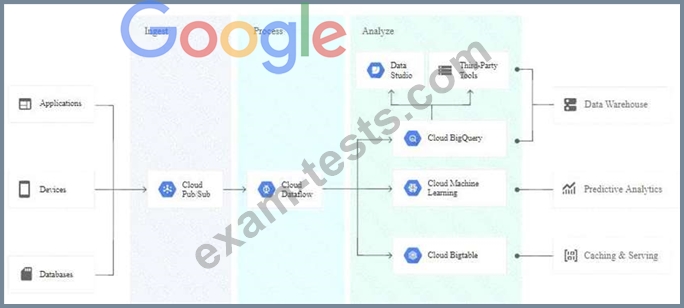

For this question, refer to the TerramEarth case study. You need to implement a reliable, scalable GCP solution for the data warehouse for your company, TerramEarth. Considering the TerramEarth business and technical requirements, what should you do?

Question 202

For this question, refer to the TerramEarth case study.

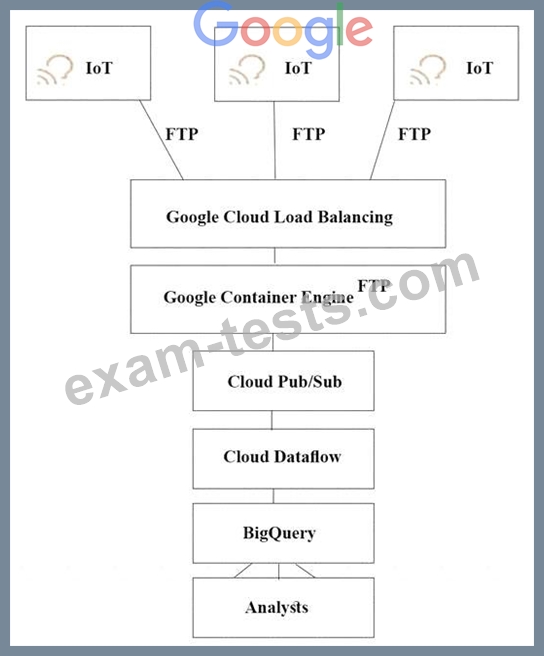

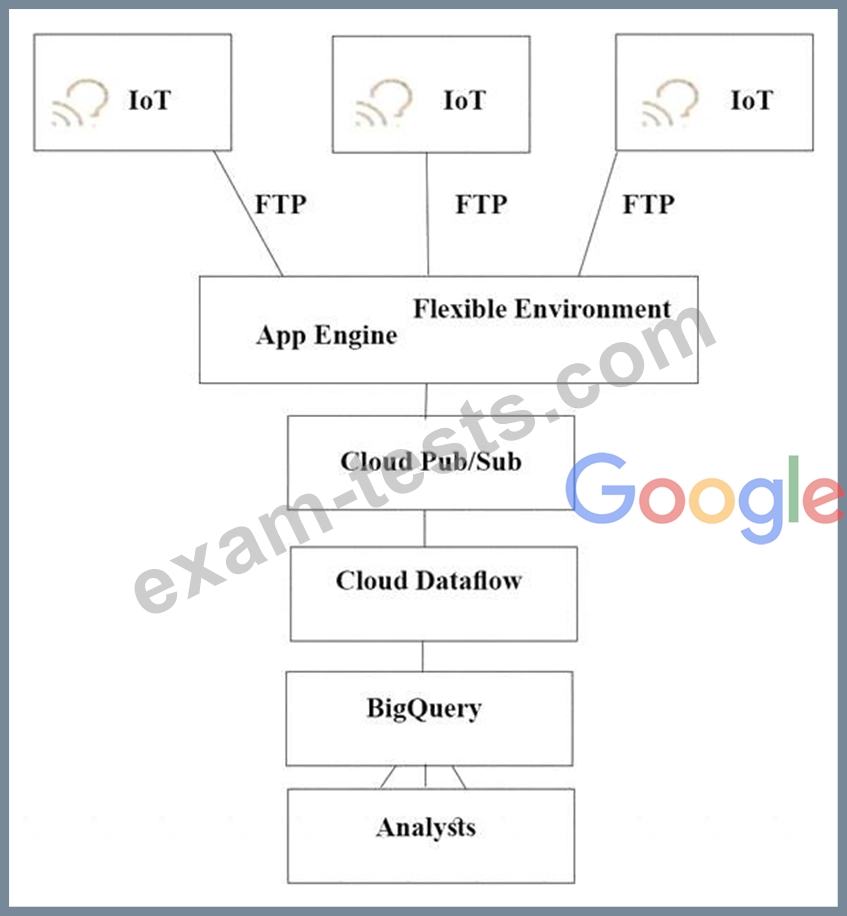

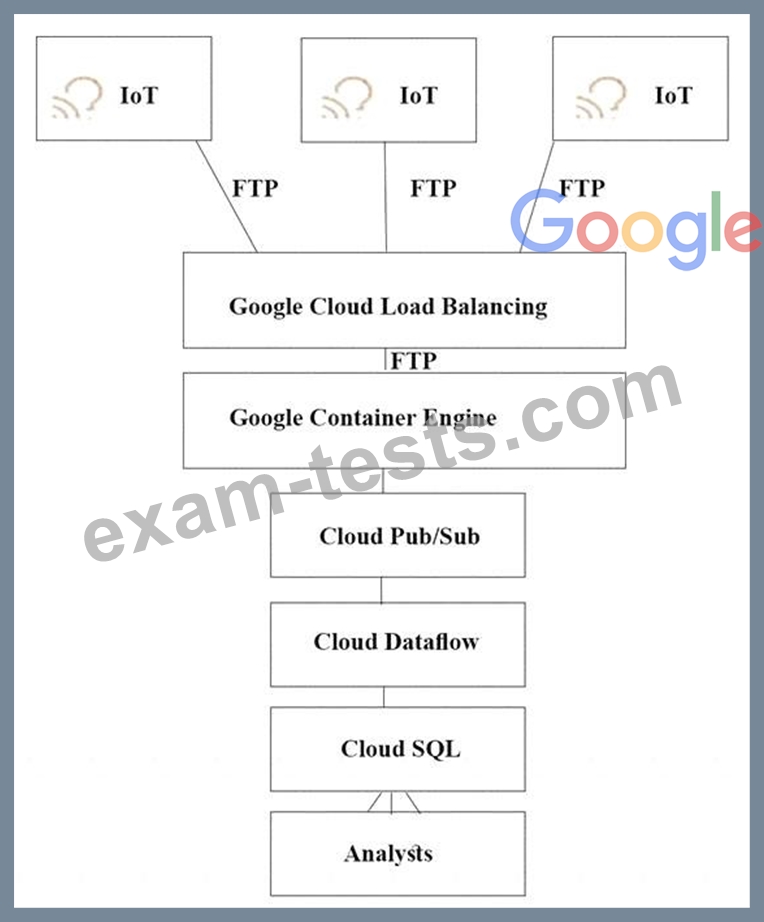

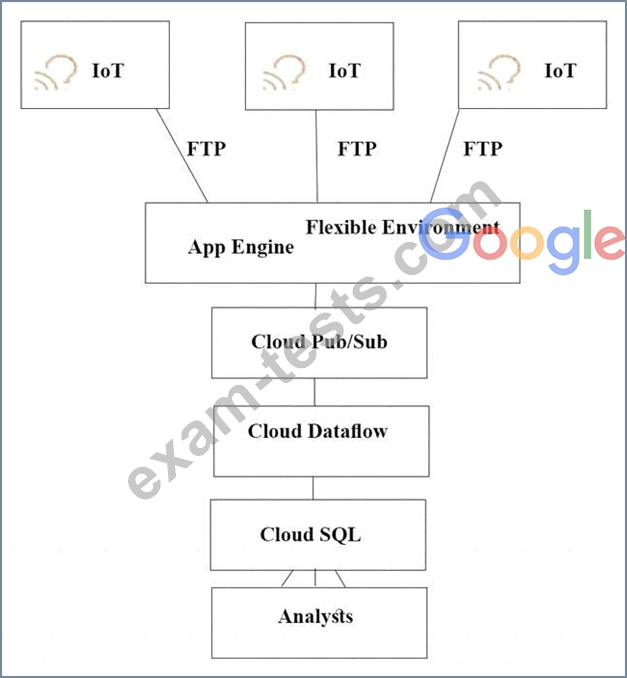

TerramEarth's CTO wants to use the raw data from connected vehicles to help identify approximately when a vehicle in the field will have a catastrophic failure. You want to allow analysts to centrally query the vehicle data. Which architecture should you recommend?

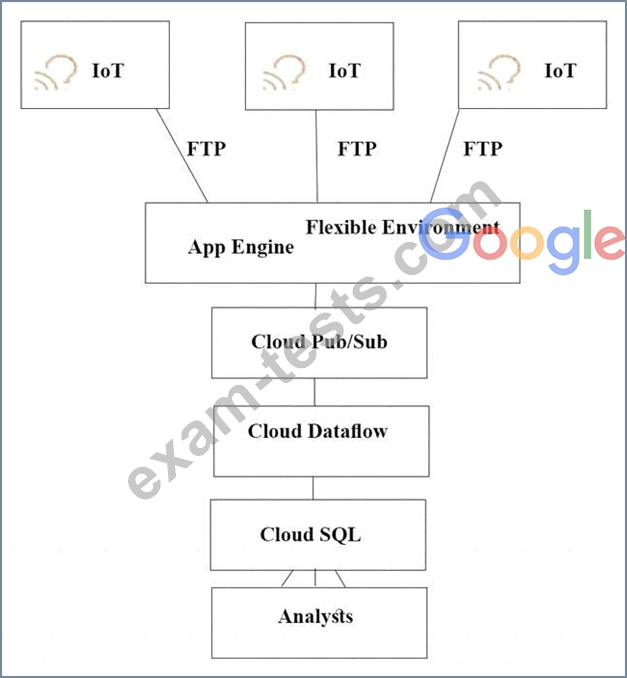

A)

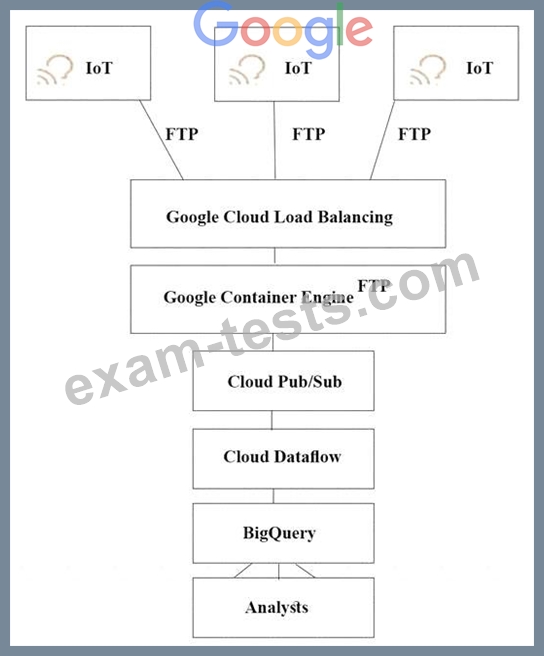

B)

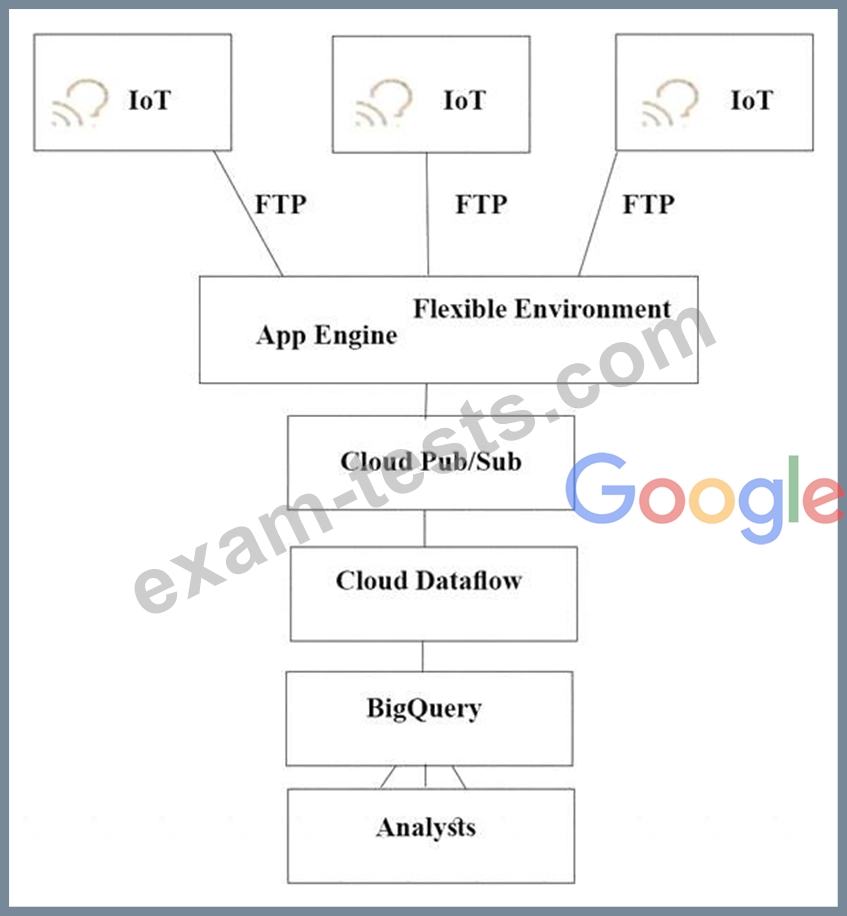

C)

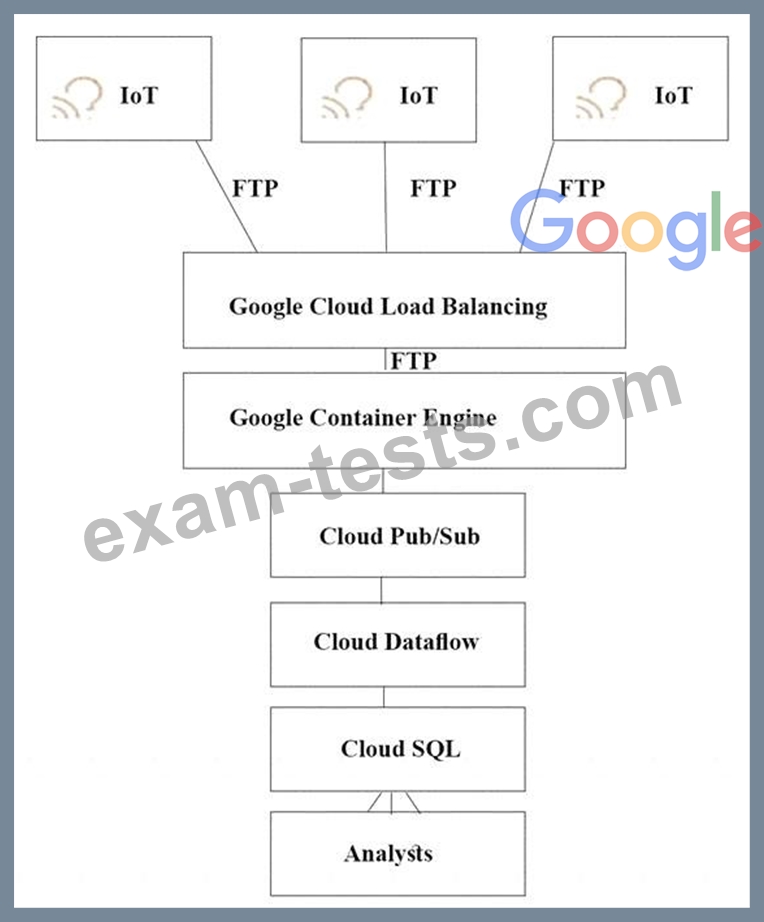

D)

Question 203

For this question, refer to the Dress4Win case study.

Dress4Win has asked you for advice on how to migrate their on-premises MySQL deployment to the cloud.

They want to minimize downtime and performance impact to their on-premises solution during the migration.

Which approach should you recommend?

Question 204

You are moving an application that uses MySQL from on-premises to Google Cloud. The application will run on Compute Engine and will use Cloud SQL. You want to cut over to the Compute Engine deployment of the application with minimal downtime and no data loss to your customers. You want to migrate the application with minimal modification. You also need to determine the cutover strategy. What should you do?

Question 205

For this question, refer to the TerramEarth case study. A new architecture that writes all incoming data to BigQuery has been introduced. You notice that the data is dirty, and want to ensure data quality on an automated daily basis while managing cost.

What should you do?
